By Dr. Robert Buccigrossi, TCG CTO

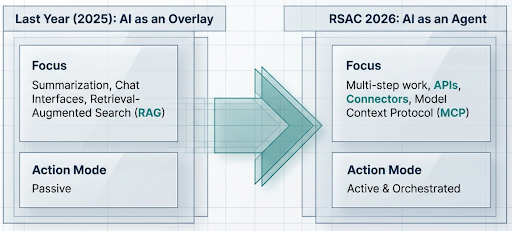

At the 2026 RSA Conference, the dominant phrase was “Agentic AI.” Last year, nearly every company was eager to sprinkle AI over existing products, usually in the form of summarization, chat interfaces, or retrieval-augmented search. This year was different. Vendors were talking about AI performing multi-step work through tools, APIs, connectors, and orchestration layers. RSAC itself previewed Agentic AI and Model Context Protocol (MCP) as major themes, and that matched what I heard repeatedly on the floor. To me, there were two key trends under the broader theme of Agentic AI.

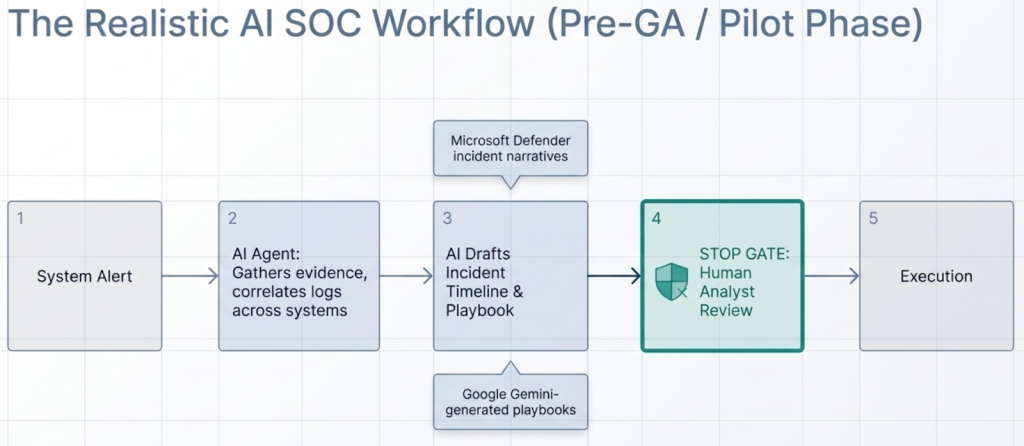

AI SOC: Security Operations with AI Agents in the Loop

The first major trend was what many vendors described as the “AI SOC” or “agentic SOC”. The idea is appealing. Instead of just summarizing alerts or answering questions about logs, AI agents would help conduct investigations, gather evidence, correlate data across systems, draft incident timelines, and even help build response playbooks.

That said, a truly autonomous AI SOC is not here yet. Much of what I saw looks like early productization, guided workflows, or carefully staged demos rather than mature autonomous operations. Cisco and Splunk framed this as a “human-led, machine-accelerated SOC”, which is probably the most credible way to describe the current state of the technology.

Microsoft’s Security Copilot promptbooks are a good example of something promising and practical. They allow repeatable investigation flows and structured prompts to support analysts, but Microsoft is explicit that outputs should be reviewed by humans. Microsoft’s incident report capabilities in Defender are also useful because they consolidate actions, classifications, and next steps into something readable. This is where generative AI shines: synthesizing data, producing narratives, and helping analysts move faster. It is support for analysts, not a replacement for them.

Google’s direction is similarly promising. Google Cloud has been openly describing an “Agentic SOC” in which AI accelerates triage and investigation while humans remain in charge. Its Triage and Investigation Agent exposes evidence, reasoning, confidence, and suggested next steps rather than pretending to be an oracle. Google’s documentation for Gemini-generated playbooks marks them as Pre-GA and states that they are designed for analysts to review step by step before execution. To me, this is the right kind of realism. There is real value in having AI draft a playbook or assemble the context for a response. There is much less evidence that we should be turning incident response over to autonomous agents anytime soon.
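To make the "review step by step before execution" idea concrete, here is a minimal sketch of a human approval gate in Python. It is not Google's or Microsoft's API. The names `PlaybookStep`, `run_with_review`, and `analyst_approves` are hypothetical, and the approval function is a stand-in for a real analyst clicking approve or reject.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PlaybookStep:
    """One step of an AI-drafted playbook (hypothetical structure)."""
    description: str
    action: Callable[[], str]


@dataclass
class RunResult:
    executed: List[str] = field(default_factory=list)
    skipped: List[str] = field(default_factory=list)


def run_with_review(steps: List[PlaybookStep],
                    approve: Callable[[PlaybookStep], bool]) -> RunResult:
    """Run each step only if the reviewer approves it; record what was skipped."""
    result = RunResult()
    for step in steps:
        if approve(step):
            result.executed.append(step.action())
        else:
            result.skipped.append(step.description)
    return result


# A drafted playbook: one evidence-gathering step, one disruptive step.
draft = [
    PlaybookStep("Collect sign-in logs for the affected account",
                 lambda: "logs collected"),
    PlaybookStep("Disable the affected account",
                 lambda: "account disabled"),
]


def analyst_approves(step: PlaybookStep) -> bool:
    """Stand-in for a human decision: reject the disruptive step for now."""
    return not step.description.startswith("Disable")


outcome = run_with_review(draft, analyst_approves)
```

In this sketch the agent drafts and the human decides: nothing runs unless the reviewer says yes, and the rejected step is kept in `outcome.skipped` so it stays visible for follow-up.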

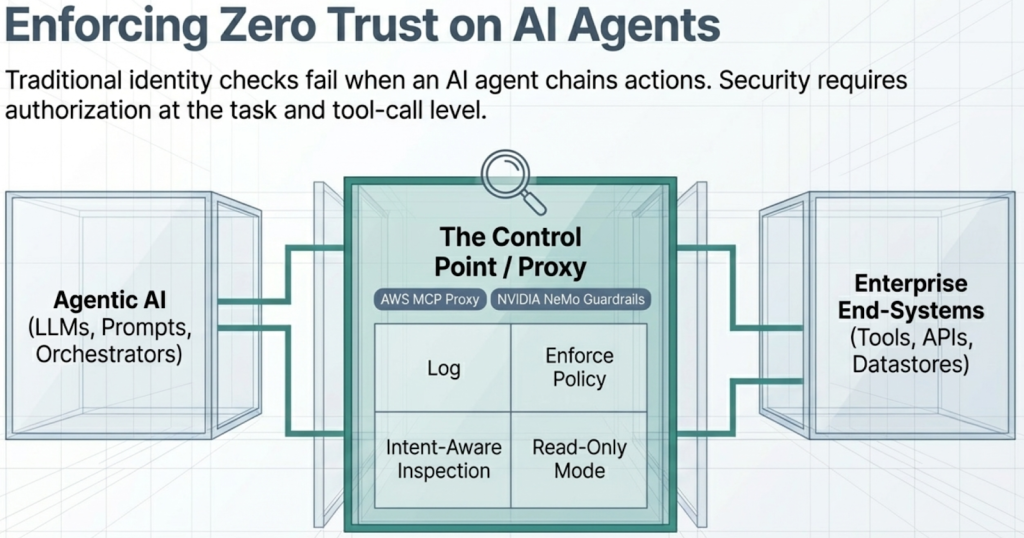

Protecting Against Agentic AI: Keeping Digital Co-Workers on a Leash

The second major trend was the flip side of the first: if AI agents are going to act across systems, how do we protect the enterprise from those agents being over-privileged, manipulated, or simply wrong? If an agent can access data, query systems, write tickets, run scripts, or invoke downstream tools, then the security problem is no longer just whether the agent has an identity. The security problem is what that agent is allowed to do, why it is allowed to do it, and how an organization can observe or stop it midstream.

Cisco’s messaging on this point was notable. In its AI Defense expansion announcement, Cisco described the need for MCP visibility, logging, policy control, and “intent-aware inspection” of tool requests and agent interactions. Stripped of the marketing language, the underlying point is sound. Traditional identity checks are not enough when an AI agent can perform chains of actions. What matters is authorization at the task and tool-call level. This is why “zero trust” came roaring back into the discussion this year as a key architectural approach to protecting against agentic AI.

The standards and guidance communities are already pointing in the same direction. OWASP’s Top 10 for LLM Applications explicitly calls out “Excessive Agency” as a top risk. That’s an elegant phrase for a critical problem: if you give an LLM-driven system too much freedom to act, especially through tools, you have created a security vulnerability. The Model Context Protocol security guidance is also revealing because it documents risks such as confused deputy problems in MCP proxy servers. In other words, even the protocol ecosystem is already warning implementers where the failures are likely to occur. NIST SP 800-207 and CISA’s Zero Trust Maturity Model are also highly relevant because they emphasize least privilege, granular authorization, and per-request decision making. Those ideas map naturally to the control of non-human actors.
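As a rough illustration of least privilege and per-request decisions applied to an agent, the sketch below authorizes each tool call against an allow-list keyed by agent and task, and denies everything else by default. It is an assumption-laden toy, not code from NIST, CISA, OWASP, or any vendor. The `ToolCall` shape, the `POLICY` table, and the agent, task, and tool names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class ToolCall:
    """A single request from an agent to use a tool (hypothetical shape)."""
    agent_id: str
    task: str
    tool: str
    operation: str  # "read" or "write"


# Hypothetical allow-list: (agent, task) -> permitted (tool, operation) pairs.
# Anything not listed here is denied.
POLICY: Dict[Tuple[str, str], FrozenSet[Tuple[str, str]]] = {
    ("triage-agent", "investigate-alert"): frozenset({
        ("siem", "read"),
        ("ticketing", "write"),
    }),
}


def authorize(call: ToolCall) -> bool:
    """Decide each call on its own: knowing who the agent is does not suffice."""
    allowed = POLICY.get((call.agent_id, call.task), frozenset())
    return (call.tool, call.operation) in allowed


# The same agent is allowed to read from the SIEM for this task...
can_read = authorize(
    ToolCall("triage-agent", "investigate-alert", "siem", "read"))
# ...but not to write to it, and an unknown agent gets nothing.
can_write = authorize(
    ToolCall("triage-agent", "investigate-alert", "siem", "write"))
unknown_agent = authorize(
    ToolCall("other-agent", "investigate-alert", "siem", "read"))
```

The point of keying the policy on the task as well as the agent is that a valid identity alone grants nothing: the same agent working on a different task would start from an empty set of permissions.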

One emerging approach to keeping AI agents in line is to keep them from directly talking to anything. AWS has already released an MCP Proxy for AWS with features like logging and read-only mode. NVIDIA’s NeMo Guardrails offers a layer of programmable runtime controls. Vendors at RSAC demonstrated proxies for LLMs, MCP servers, and tools. The theme is: put a control point in the middle, inspect requests and responses, enforce policy, and generate an audit trail.
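The control-point pattern can be sketched in a few lines of Python. This is a simplified illustration, not the AWS MCP Proxy or NeMo Guardrails API. The `Tool` and `ToolProxy` classes and the tool names are hypothetical, and response inspection is left out to keep the example short.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Tool:
    func: Callable[..., Any]
    mutating: bool  # declared by the operator at registration, not by the agent


class ToolProxy:
    """Sits between the agent and its tools: enforces policy, logs every call."""

    def __init__(self, tools: Dict[str, Tool], read_only: bool = True) -> None:
        self._tools = tools
        self._read_only = read_only
        self.audit_log: List[Dict[str, Any]] = []

    def call(self, agent_id: str, tool_name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(tool_name)
        if tool is None:
            decision = "deny:unknown_tool"
        elif tool.mutating and self._read_only:
            decision = "deny:read_only"
        else:
            decision = "allow"
        # Record the request and the decision before anything executes.
        self.audit_log.append({
            "ts": time.time(),
            "agent": agent_id,
            "tool": tool_name,
            "args": kwargs,
            "decision": decision,
        })
        if decision != "allow":
            raise PermissionError(decision)
        return tool.func(**kwargs)


proxy = ToolProxy({
    "search_logs": Tool(lambda query: [f"match for {query}"], mutating=False),
    "disable_account": Tool(lambda user: f"{user} disabled", mutating=True),
})

hits = proxy.call("triage-agent", "search_logs", query="failed login")

try:
    proxy.call("triage-agent", "disable_account", user="alice")
    blocked = False
except PermissionError:
    blocked = True
```

Because the agent never holds a direct reference to the tools, the read-only switch and the audit trail apply to every call, including the ones that are refused.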

My takeaway from RSAC 2026 is that Agentic AI security operations are progressing but are still much closer to a supervised pilot than to an autonomous reality. The story is compelling when it is framed honestly: AI is helping analysts investigate, correlate, summarize, and draft, with humans accountable for actions. The defense against Agentic AI is just as important: if agents become digital co-workers, then they must be governed like powerful insiders with limited privileges, monitored actions, and constrained authority. The companies that matter over the next year or two will not be the ones with the flashiest demos. They will be the ones that can show that their agents are observable, governable, least-privileged, and interruptible. It’s not the most exciting marketing message from RSAC 2026, but it’s probably the most important one.